LLMs often show a peculiar behavior in which the first token in a sequence draws unusually high attention, a pattern known as an “attention sink.” Despite seeming unimportant, this token frequently dominates attention across many heads in Transformer models. While prior research has explored when and how attention sinks occur, the reasons behind their emergence and their functional role remain unclear. These attention patterns matter in practice: they affect quantization, key-value caching, and streaming attention, and have even been linked to security vulnerabilities, which underscores the need for a deeper understanding.

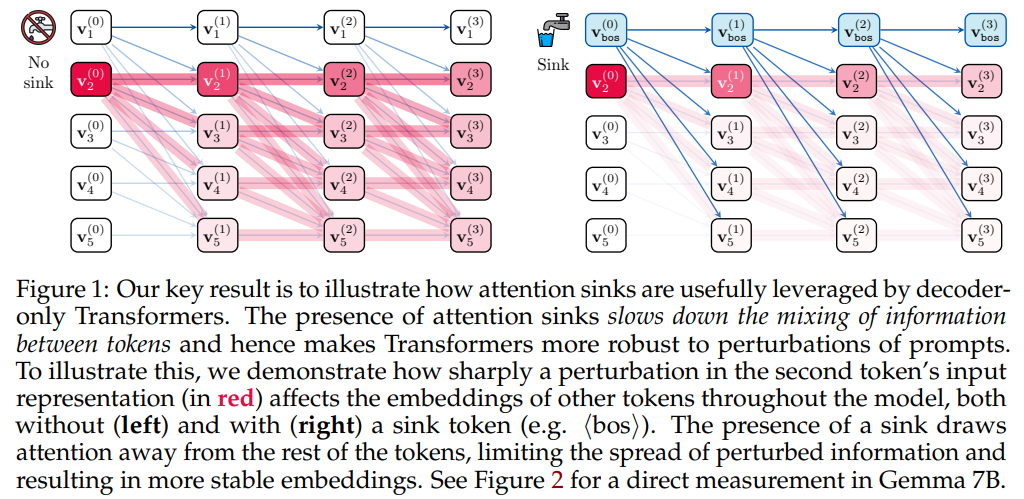

Researchers from the University of Oxford, NUS, and Google DeepMind explored why attention sinks, in which models focus heavily on the first token, emerge in LLMs. Whereas past work has tried to reduce them, the authors argue that these sinks serve a functional role by preventing over-mixing of token representations, which can lead to collapse or instability in deep Transformers. The ⟨bos⟩ token often attracts the majority of attention, limiting the spread of perturbations and stabilizing the model. Experiments on models like Gemma 7B and Llama 3.1 405B confirm that attention sinks become more prominent in deeper models and longer contexts, supporting their theory.

The study explores how decoder-only Transformers, the architecture behind most modern language models, use attention mechanisms to process sequences token by token. In such models, causal masking means each token can attend only to itself and earlier tokens. A recurring phenomenon in these models is the emergence of “attention sinks”: tokens such as the beginning-of-sequence (⟨bos⟩) token that disproportionately attract attention across multiple heads and layers. While these sinks were previously seen as artifacts of large key and query activations, this work argues that they are vital in maintaining stable representations, especially in long sequences. By concentrating attention, sinks prevent excessive mixing of information across layers, helping to preserve the uniqueness of token representations.
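To make the idea of causal masking and a sink concrete, here is a minimal NumPy sketch. It is not taken from the paper: the `sink_mass` metric, the random scores, and the `+5.0` logit boost on the first key are all illustrative assumptions chosen only to show what "attention concentrated on the first token" looks like numerically.

```python
import numpy as np

def causal_attention(scores):
    """Row-wise softmax over `scores` under a causal mask (query i sees keys 0..i)."""
    n = scores.shape[-1]
    mask = np.tril(np.ones((n, n), dtype=bool))
    masked = np.where(mask, scores, -np.inf)
    # Subtract the row maximum for numerical stability before exponentiating.
    masked = masked - masked.max(axis=-1, keepdims=True)
    weights = np.exp(masked)
    return weights / weights.sum(axis=-1, keepdims=True)

def sink_mass(weights):
    """Average attention placed on position 0 by queries 1..n-1 (illustrative metric)."""
    return float(weights[1:, 0].mean())

rng = np.random.default_rng(0)
n = 8
scores = rng.normal(size=(n, n))  # stand-in for query-key dot products

plain = causal_attention(scores)

boosted = scores.copy()
boosted[:, 0] += 5.0  # hypothetical: every query has a large logit for the first key
sinky = causal_attention(boosted)

print("sink mass without boost:", sink_mass(plain))
print("sink mass with boost:   ", sink_mass(sinky))
```

In this toy setup, raising the logit of the first key pulls most of each row's probability mass onto position 0, which is the pattern the article describes as an attention sink.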

The study connects attention sinks to problems like rank collapse and over-squashing, which degrade model performance by compressing diverse inputs into indistinct representations. It uses mathematical tools like Jacobian norms to show how attention sinks reduce sensitivity to perturbations, effectively acting as stabilizers that prevent representational collapse. Experiments on models like Gemma 7B confirm that removing attention sinks increases information diffusion, while their presence maintains sharper, more localized attention patterns. Thus, attention sinks are not just a side effect but a structural feature that supports the Transformer’s ability to stay stable at large depth and over long contexts.
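The sensitivity argument can be illustrated with a toy linear model. This is a sketch under strong assumptions and not the paper's analysis: each layer is taken to be the averaged residual update `X <- (X + A @ V) / 2`, attention weights are fixed rather than learned, and the sink token's value vector is assumed to be zero so that attending to it contributes nothing. Under those assumptions, the Jacobian of the last token's output with respect to an earlier input token is a scalar multiple of the identity, and the scalar is an entry of the product of the per-layer mixing matrices.

```python
import numpy as np

def mixing_matrix(n, sink_strength):
    """Toy causal attention: each query puts `sink_strength` on token 0
    and spreads the remaining weight uniformly over tokens 0..i."""
    uniform = np.tril(np.ones((n, n)))
    uniform /= uniform.sum(axis=1, keepdims=True)
    sink = np.zeros((n, n))
    sink[:, 0] = 1.0
    return sink_strength * sink + (1.0 - sink_strength) * uniform

def sensitivity(n, depth, sink_strength, source=1):
    """Scalar factor of d(last token output) / d(input token `source`)
    after `depth` toy layers X <- (X + A @ V) / 2."""
    attn = mixing_matrix(n, sink_strength)
    attn[:, 0] = 0.0  # assumption: the sink token's value vector is zero
    layer = 0.5 * (np.eye(n) + attn)
    total = np.linalg.matrix_power(layer, depth)
    return float(total[-1, source])

n_tokens, n_layers = 16, 12
no_sink = sensitivity(n_tokens, n_layers, sink_strength=0.0)
with_sink = sensitivity(n_tokens, n_layers, sink_strength=0.8)
print("sensitivity without sink:", no_sink)
print("sensitivity with sink:   ", with_sink)
```

In this simplified model, weight diverted to the sink is weight not spent mixing in other tokens, so a perturbation at an early token reaches the last token more weakly when the sink is strong. Real models have learned values, MLPs, and normalization, so the numbers here are only qualitative.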

The study investigates whether the beginning-of-sequence (⟨bos⟩) token holds any special role in forming attention sinks in language models. Through a series of experiments using different data packing and masking strategies, the researchers find that attention sinks consistently form at the first token of the input, whether or not it is explicitly marked as ⟨bos⟩. However, when ⟨bos⟩ is fixed at the start of every sequence during pretraining, the model learns to rely on it more heavily to stabilize attention and prevent over-mixing of token representations. Removing ⟨bos⟩ during inference in such models leads to a collapse in sink formation and a significant drop in performance. This highlights that although the first token always plays a role in anchoring attention, the training setup—especially the consistent presence of ⟨bos⟩—greatly strengthens this effect.
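The packing and masking strategies mentioned above differ mainly in which earlier tokens a query is allowed to see. The sketch below is an illustrative assumption about two common choices (a plain causal mask over the whole packed context, and a causal mask restricted to each document); it is not the paper's exact experimental setup, and `doc_ids` is a made-up example. It shows which position is the earliest visible token for each query, and therefore the candidate position for a sink.

```python
import numpy as np

def causal_mask(n):
    """Plain causal mask: query i may attend to keys 0..i."""
    return np.tril(np.ones((n, n), dtype=bool))

def document_causal_mask(doc_ids):
    """Causal mask that also blocks attention across packed documents."""
    doc_ids = np.asarray(doc_ids)
    same_doc = doc_ids[:, None] == doc_ids[None, :]
    return causal_mask(len(doc_ids)) & same_doc

def first_visible(mask):
    """Index of the earliest key each query may attend to."""
    return mask.argmax(axis=1)

# Hypothetical packed context: three documents of lengths 3, 2, and 3.
doc_ids = [0, 0, 0, 1, 1, 2, 2, 2]

plain_first = first_visible(causal_mask(len(doc_ids)))
packed_first = first_visible(document_causal_mask(doc_ids))
print("plain causal mask:   ", plain_first.tolist())
print("document causal mask:", packed_first.tolist())
```

With the plain causal mask every query can still see position 0 of the packed context, while the document mask makes the first token of each document the earliest visible one.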

In conclusion, the study argues that attention sinks are a structural solution to challenges like over-squashing and excessive mixing in deep Transformers. Directing attention toward the initial token—typically ⟨bos⟩—helps the model reduce its sensitivity to input noise and retain distinct token representations over long contexts. The findings also show that context length, model depth, and training configurations significantly affect how and where sinks form. By offering theoretical insights and empirical validation, the work presents attention sinks not as quirks but as components contributing to large language models’ stability and efficiency.

Check out the Paper. All credit for this research goes to the researchers of this project.

The post Unveiling Attention Sinks: The Functional Role of First-Token Focus in Stabilizing Large Language Models appeared first on MarkTechPost.

