Challenges in Localized Captioning for Vision-Language Models

Describing specific regions within images or videos remains a persistent challenge in vision-language modeling. While general-purpose vision-language models (VLMs) perform well at generating global captions, they often fall short in producing detailed, region-specific descriptions. These limitations are amplified in video data, where models must account for temporal dynamics. Primary obstacles include a loss of fine-grained detail during visual feature extraction, insufficient annotated datasets tailored for regional description, and evaluation benchmarks that penalize accurate outputs due to incomplete reference captions.

Describe Anything 3B: A Model Tailored for Localized Descriptions

NVIDIA presents Describe Anything 3B (DAM-3B), a multimodal large language model purpose-built for detailed, localized captioning of images and videos. Together with its companion DAM-3B-Video, the system accepts region prompts given as points, bounding boxes, scribbles, or masks and generates contextually grounded descriptions of the indicated region. It supports both static images and video, and the models are publicly available on Hugging Face.
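To make the region inputs concrete, the sketch below shows one simple way to normalize different prompt types into a single binary mask that a downstream model could consume. It is an illustration only: the function names `box_to_mask` and `points_to_mask` are hypothetical, and the naive rasterization used here is an assumption, not DAM-3B's actual preprocessing (a production system would more plausibly use a segmentation model for this step).

```python
from typing import Iterable, List, Tuple

Mask = List[List[int]]  # rows of 0/1 values, indexed as mask[y][x]


def box_to_mask(height: int, width: int, box: Tuple[int, int, int, int]) -> Mask:
    """Rasterize an (x0, y0, x1, y1) box (end-exclusive) into a binary mask."""
    x0, y0, x1, y1 = box
    # Clamp the box to the image bounds.
    x0, y0 = max(0, x0), max(0, y0)
    x1, y1 = min(width, x1), min(height, y1)
    return [
        [1 if (x0 <= x < x1 and y0 <= y < y1) else 0 for x in range(width)]
        for y in range(height)
    ]


def points_to_mask(
    height: int, width: int, points: Iterable[Tuple[int, int]], radius: int = 1
) -> Mask:
    """Mark a small disc around each (x, y) click or scribble sample."""
    mask = [[0] * width for _ in range(height)]
    for px, py in points:
        for y in range(max(0, py - radius), min(height, py + radius + 1)):
            for x in range(max(0, px - radius), min(width, px + radius + 1)):
                if (x - px) ** 2 + (y - py) ** 2 <= radius ** 2:
                    mask[y][x] = 1
    return mask
```

A scribble can be treated as a dense list of points, so `points_to_mask` covers both clicks and scribbles in this toy setting.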

Core Architectural Components and Model Design

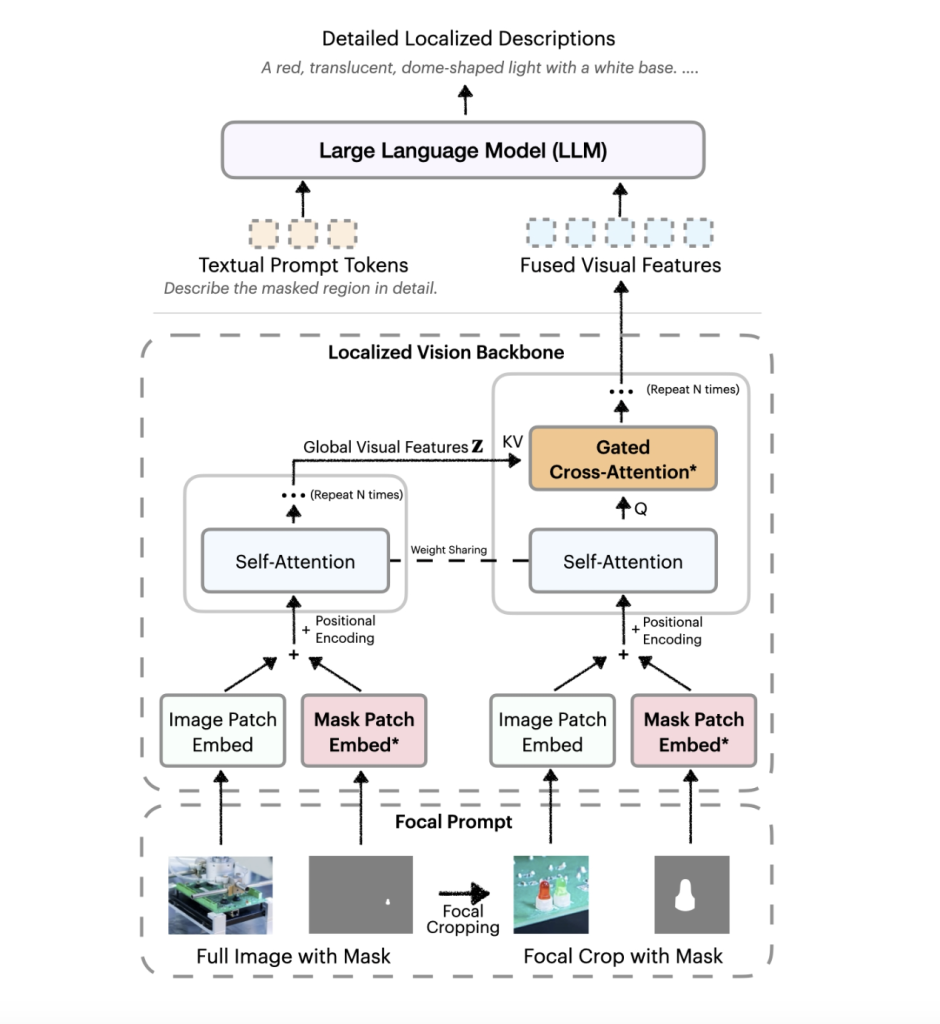

DAM-3B incorporates two principal innovations: a focal prompt and a localized vision backbone enhanced with gated cross-attention. The focal prompt fuses a full image with a high-resolution crop of the target region, retaining both regional detail and broader context. This dual-view input is processed by the localized vision backbone, which embeds the image and mask inputs and applies cross-attention to blend global and focal features before passing them to a large language model. These mechanisms are integrated without inflating token length, preserving computational efficiency.
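The two ideas in this paragraph can be sketched in plain Python. The first half computes a focal crop box by expanding the mask's bounding box so the crop retains surrounding context; the second half blends focal tokens with global tokens through a gated cross-attention step whose output has exactly as many tokens as the focal input. Everything here is a simplified assumption for illustration: the expansion factor, the tanh gate, the absence of learned projections, and all function names are hypothetical rather than taken from the DAM-3B implementation, and resizing the crop to high resolution is omitted.

```python
import math
from typing import List, Sequence, Tuple

Box = Tuple[int, int, int, int]  # (x0, y0, x1, y1), end-exclusive


def mask_bbox(mask: Sequence[Sequence[int]]) -> Box:
    """Tight bounding box around the nonzero pixels of a binary mask."""
    ys = [y for y, row in enumerate(mask) if any(row)]
    xs = [x for row in mask for x, v in enumerate(row) if v]
    if not ys:
        raise ValueError("mask is empty")
    return min(xs), min(ys), max(xs) + 1, max(ys) + 1


def focal_crop_box(mask: Sequence[Sequence[int]], expand: float = 3.0) -> Box:
    """Grow the mask's box around its center, clamped to the image bounds.

    The expansion factor is an illustrative assumption.
    """
    height, width = len(mask), len(mask[0])
    x0, y0, x1, y1 = mask_bbox(mask)
    cx, cy = (x0 + x1) / 2, (y0 + y1) / 2
    half_w = (x1 - x0) * expand / 2
    half_h = (y1 - y0) * expand / 2
    return (
        max(0, math.floor(cx - half_w)),
        max(0, math.floor(cy - half_h)),
        min(width, math.ceil(cx + half_w)),
        min(height, math.ceil(cy + half_h)),
    )


def crop(grid: Sequence[Sequence[float]], box: Box) -> List[List[float]]:
    """Cut the same window out of an image channel or a mask."""
    x0, y0, x1, y1 = box
    return [list(row[x0:x1]) for row in grid[y0:y1]]


def softmax(scores: Sequence[float]) -> List[float]:
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def gated_cross_attention(
    focal_tokens: Sequence[Sequence[float]],
    global_tokens: Sequence[Sequence[float]],
    gate: float,
) -> List[List[float]]:
    """Focal tokens attend to global tokens; the result is added back through
    a tanh gate. One output token per focal token, so sequence length is
    unchanged. No learned projections are used in this sketch."""
    dim = len(focal_tokens[0])
    scale = 1.0 / math.sqrt(dim)
    g = math.tanh(gate)  # gate = 0 leaves the focal tokens untouched
    out = []
    for q in focal_tokens:
        scores = [scale * sum(a * b for a, b in zip(q, k)) for k in global_tokens]
        weights = softmax(scores)
        attended = [
            sum(w * k[d] for w, k in zip(weights, global_tokens)) for d in range(dim)
        ]
        out.append([qd + g * ad for qd, ad in zip(q, attended)])
    return out
```

In this sketch the gate controls how much global context flows into the focal features: at zero the focal tokens pass through unchanged, and larger values mix in more of the attended global signal without adding tokens.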

DAM-3B-Video extends this architecture to temporal sequences by encoding frame-wise region masks and integrating them across time. This allows region-specific descriptions to be generated for videos, even in the presence of occlusion or motion.

Training Data Strategy and Evaluation Benchmarks

To overcome data scarcity, NVIDIA developed DLC-SDP, a semi-supervised data generation pipeline. This two-stage process draws on segmentation datasets and unlabeled web-scale images to curate a training corpus of 1.5 million localized examples. Region descriptions are then refined through self-training to improve caption quality.
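As a rough illustration of what self-training means in this setting, the sketch below shows one generic pseudo-labeling round: a captioner trained on labeled regions describes unlabeled regions, and only confident outputs are added to the corpus. This is a textbook pattern, not the DLC-SDP implementation; the `captioner` callable, the confidence score, and the `min_confidence` threshold are all hypothetical.

```python
from typing import Callable, List, Tuple

Region = str                  # hypothetical stand-in for an image region
Example = Tuple[Region, str]  # (region, caption)


def self_training_round(
    labeled: List[Example],
    unlabeled: List[Region],
    captioner: Callable[[Region], Tuple[str, float]],
    min_confidence: float = 0.8,
) -> List[Example]:
    """Return the labeled set extended with confident pseudo-labels."""
    accepted: List[Example] = []
    for region in unlabeled:
        caption, confidence = captioner(region)
        # Keep only captions the current model is confident about.
        if confidence >= min_confidence:
            accepted.append((region, caption))
    return labeled + accepted
```

In practice the captioner would be retrained on the enlarged set and the round repeated, so each pass can label regions the previous model was unsure about.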

For evaluation, the team introduces DLC-Bench, which scores descriptions on attribute-level correctness rather than on rigid comparison with reference captions. DAM-3B achieves leading performance across seven benchmarks, surpassing baselines such as GPT-4o and VideoRefer. It performs strongly on keyword-level (LVIS, PACO), phrase-level (Flickr30k Entities), and multi-sentence (Ref-L4, HC-STVG) localized captioning. On DLC-Bench, DAM-3B reaches an average accuracy of 67.3%, outperforming other models in both detail and precision.
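The difference between attribute-level scoring and reference matching can be shown with a toy scorer. The sketch below rewards a caption for mentioning attributes that are true of the region and for avoiding attributes that are not, so an accurate caption is not penalized merely for differing from a reference sentence. It is a simplified assumption for illustration: the function name, the positive/negative split, the averaging, and the naive substring matching (standing in for a proper judge) are hypothetical and do not reproduce the DLC-Bench protocol.

```python
from typing import Dict, Sequence


def attribute_score(
    caption: str, expected: Sequence[str], forbidden: Sequence[str]
) -> Dict[str, float]:
    """Score a caption by attribute coverage instead of reference overlap."""
    text = caption.lower()
    # Fraction of true attributes the caption mentions.
    hits = sum(1 for attr in expected if attr.lower() in text)
    # Fraction of false attributes the caption correctly avoids.
    avoided = sum(1 for attr in forbidden if attr.lower() not in text)
    positive = hits / len(expected) if expected else 1.0
    negative = avoided / len(forbidden) if forbidden else 1.0
    return {
        "positive": positive,
        "negative": negative,
        "average": (positive + negative) / 2,
    }
```

A caption that adds correct detail beyond any reference text still scores well here, which is the failure mode of reference-based metrics that this style of evaluation is meant to avoid.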

Conclusion

Describe Anything 3B addresses longstanding limitations in region-specific captioning by combining a context-aware architecture with a scalable, high-quality data pipeline. The model’s ability to describe localized content in both images and videos has broad applicability across domains such as accessibility tools, robotics, and video content analysis. With this release, NVIDIA provides publicly available models and a benchmark, DLC-Bench, that future work on localized captioning can build on and compare against.

The post NVIDIA AI Releases Describe Anything 3B: A Multimodal LLM for Fine-Grained Image and Video Captioning appeared first on MarkTechPost.
