Sparse attention is emerging as a compelling approach to improve the ability of Transformer-based LLMs to handle long sequences. This matters because standard self-attention, the core mechanism of LLMs, scales poorly with sequence length: its computational cost grows quadratically during the prefilling phase, increasing time-to-first-token and making deployment expensive. During the decoding phase, dense attention requires a key-value (KV) cache that grows linearly with sequence length, so reading cached key-value pairs consumes significant memory bandwidth. These inefficiencies pose substantial challenges for both long-context modeling and scaling at inference time.
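To make the two growth rates concrete, here is a rough back-of-the-envelope sketch. The model shape (32 layers, hidden size 4096, 8 key-value heads of dimension 128, 16-bit values) is a hypothetical configuration chosen for illustration and is not taken from the study; the FLOP formula counts only the two attention matrix multiplications and ignores causal masking.

```python
def attention_prefill_flops(seq_len, d_model, n_layers):
    """Rough FLOPs for the two attention matmuls (QK^T and weights @ V) over a full prompt.

    Each matmul costs about 2 * seq_len^2 * d_model per layer. Causal masking and
    the projection layers are ignored, so this is an order-of-magnitude estimate.
    """
    return 4 * seq_len * seq_len * d_model * n_layers


def kv_cache_bytes(seq_len, n_layers, n_kv_heads, head_dim, bytes_per_value=2):
    """Bytes needed to cache keys and values for one sequence (leading 2 = K and V)."""
    return 2 * seq_len * n_layers * n_kv_heads * head_dim * bytes_per_value


# Hypothetical model shape, for illustration only (not taken from the study).
LAYERS, D_MODEL, KV_HEADS, HEAD_DIM = 32, 4096, 8, 128

for n in (32 * 1024, 128 * 1024):
    flops = attention_prefill_flops(n, D_MODEL, LAYERS)
    cache_gib = kv_cache_bytes(n, LAYERS, KV_HEADS, HEAD_DIM) / 2**30
    print(f"{n:>7} tokens: {flops:.2e} attention FLOPs, {cache_gib:.1f} GiB KV cache")
```

Under these assumptions, moving from 32k to 128k tokens multiplies the attention FLOPs by 16 but the cache size by only 4, and the 128k cache alone occupies 16 GiB per sequence.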

Sparse attention attempts to reduce this computational burden by approximating dense attention using only a subset of query-key pairs. This has the potential to significantly accelerate long-sequence processing and reduce memory requirements, ideally while preserving model accuracy. However, despite its promise, sparse attention has yet to be thoroughly evaluated at scale. Existing studies have only scratched the surface, often focusing on limited model sizes, restricted sequence lengths, and specific applications such as multi-turn dialogue. Furthermore, the datasets used in these studies typically contain sequences of varying length, making it difficult to isolate how performance changes as sequences grow longer. As a result, the practical viability and robustness of sparse attention strategies remain underexplored.

Researchers from the University of Edinburgh, Cohere, and Meta conducted an extensive evaluation of training-free sparse attention methods across various model sizes, sequence lengths, and sparsity levels. Their study involved nine long-context tasks, including new natural language-based benchmarks designed for controlled and realistic testing. Key findings reveal that for long sequences, large, sparse models outperform smaller, dense ones under fixed computational budgets. Higher sparsity is more tolerable during decoding than during prefilling, but no single sparse strategy works universally across tasks. They also introduce scaling laws for sparse attention and release standardized implementations to support reproducible research and guide informed deployment decisions.

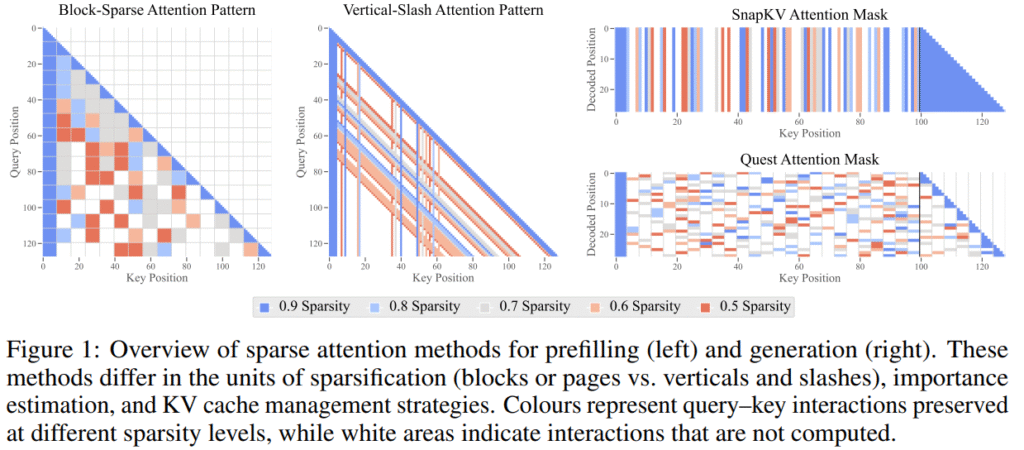

Sparse attention aims to reduce computational and memory costs in Transformers by selectively computing only important query–key interactions. This helps speed up full-sequence “prefilling” and reduce memory load during “decoding.” Key techniques include selecting which parts of the attention matrix to retain (e.g., blocks, windows), estimating importance using fixed or dynamic patterns, and allocating computational budgets either uniformly or adaptively across layers and heads. For decoding, methods either evict less useful key–value pairs to conserve memory or maintain the full cache and load only the necessary parts, balancing speed, memory efficiency, and information retention during generation.
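As a minimal illustration of the "windows" idea, the sketch below builds a causal sliding-window pattern and computes attention only over the query-key pairs that the pattern keeps. It is pure Python for readability rather than speed, and the function names (`local_window_mask`, `masked_attention`, `density`) are hypothetical helpers, not part of the released implementations.

```python
import math


def local_window_mask(n, window):
    """Causal mask where query i may attend only to keys i-window+1 .. i."""
    return [[(j <= i) and (i - j < window) for j in range(n)] for i in range(n)]


def masked_attention(q, k, v, mask):
    """Softmax attention that scores only the (query, key) pairs kept by the mask."""
    d = len(q[0])
    out = []
    for i, qi in enumerate(q):
        kept = [j for j in range(len(k)) if mask[i][j]]
        scores = [sum(a * b for a, b in zip(qi, k[j])) / math.sqrt(d) for j in kept]
        top = max(scores)  # subtract the max for numerical stability
        weights = [math.exp(s - top) for s in scores]
        total = sum(weights)
        out.append([sum(w * v[j][c] for w, j in zip(weights, kept)) / total
                    for c in range(len(v[0]))])
    return out


def density(mask):
    """Fraction of causal (query, key) pairs that the mask keeps."""
    n = len(mask)
    kept = sum(sum(row) for row in mask)
    return kept / (n * (n + 1) // 2)
```

With a window equal to the sequence length this reduces to ordinary causal attention. Shrinking the window lowers the density, and with it the number of scores that have to be computed during prefilling.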

The study investigates sparse attention methods in long-context models, analyzing performance under fixed computational budgets. At shorter sequence lengths (32k tokens), smaller dense models make better use of the budget, while at longer lengths (128k tokens), larger sparse models are preferable. Compression tolerance varies by model size and task, with larger models maintaining performance even at 20× compression. However, some tasks remain sensitive to high compression. No single method consistently excels: chunk-based methods, such as Quest, perform best in decoding, while Vertical-Slash works well in prefilling for simple tasks. A log-linear scaling law effectively predicts accuracy trends across model size, sequence length, and compression ratio.
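The chunk-based decoding idea can be sketched as follows. This is a simplified illustration in the spirit of Quest as it is commonly described (keep the full cache, summarize each chunk of keys by its elementwise minimum and maximum, and load only the chunks whose upper-bound score for the current query is highest). It is an assumption-laden sketch, not the study's or the Quest authors' implementation, and all function names are hypothetical.

```python
import math


def chunk_bounds(keys, chunk_size):
    """Per-chunk elementwise min and max over the cached keys."""
    bounds = []
    for start in range(0, len(keys), chunk_size):
        chunk = keys[start:start + chunk_size]
        lo = [min(col) for col in zip(*chunk)]
        hi = [max(col) for col in zip(*chunk)]
        bounds.append((start, lo, hi))
    return bounds


def select_chunks(query, bounds, top_k):
    """Rank chunks by an upper bound on q.k over the chunk and keep the top_k."""
    scored = []
    for start, lo, hi in bounds:
        # For each dimension, the larger of q*min and q*max bounds q*k from above.
        upper = sum(max(q * l, q * h) for q, l, h in zip(query, lo, hi))
        scored.append((upper, start))
    scored.sort(reverse=True)
    return sorted(start for _, start in scored[:top_k])


def sparse_decode_step(query, keys, values, chunk_size, top_k):
    """Attend from one new query to the keys in the selected chunks only."""
    starts = select_chunks(query, chunk_bounds(keys, chunk_size), top_k)
    idx = [j for s in starts for j in range(s, min(s + chunk_size, len(keys)))]
    d = len(query)
    scores = [sum(a * b for a, b in zip(query, keys[j])) / math.sqrt(d) for j in idx]
    top = max(scores)  # subtract the max for numerical stability
    weights = [math.exp(s - top) for s in scores]
    total = sum(weights)
    out = [sum(w * values[j][c] for w, j in zip(weights, idx)) / total
           for c in range(len(values[0]))]
    return out, idx
```

Nothing is evicted here: the whole cache stays in memory, and the saving comes from reading only `top_k * chunk_size` key-value pairs per step. In a real system the chunk bounds would be maintained incrementally as the cache grows rather than recomputed at every step, as this sketch does for brevity.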

In conclusion, the study presents a comprehensive evaluation of sparse attention methods across various model sizes (up to 72 billion parameters), sequence lengths (up to 128k tokens), and sparsity levels (up to 95%) on diverse long-sequence tasks. It finds that, under fixed compute (isoFLOPS), large sparse models outperform smaller dense ones for long contexts. While high sparsity (10–15×) can retain accuracy, performance drops significantly on some tasks even at moderate compression. The best sparsity strategy varies by task and phase (prefilling versus decoding), highlighting the absence of a universal solution. The authors also propose reliable scaling laws, suggesting sparse attention is promising but requires careful, task-specific application.
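The article quotes sparsity both as a percentage and as a compression factor. Assuming sparsity means the fraction of query-key interactions that are skipped, the two are related as in the short sketch below. The helper names are hypothetical, "128k" is taken to mean 131,072 tokens, and the per-query budget assumes the kept keys are spread uniformly, which adaptive methods do not do.

```python
def compression_ratio(sparsity):
    """Compression factor implied by skipping a given fraction of interactions."""
    return 1.0 / (1.0 - sparsity)


def sparsity_from_compression(ratio):
    """Fraction of interactions skipped at a given compression factor."""
    return 1.0 - 1.0 / ratio


def attended_keys(seq_len, ratio):
    """Approximate number of keys each query still attends to (uniform budget assumed)."""
    return max(1, round(seq_len / ratio))


print(compression_ratio(0.95))          # about 20x
print(sparsity_from_compression(10))    # about 0.9
print(attended_keys(128 * 1024, 20))
```

Read this way, the 95% upper end of the tested sparsity range corresponds to roughly 20× compression, and at that setting a query over a 128k-token context attends to only a few thousand keys.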


The post Exploring the Sparse Frontier: How Researchers from Edinburgh, Cohere, and Meta Are Rethinking Attention Mechanisms for Long-Context LLMs appeared first on MarkTechPost.
