Equipping LLMs with external tools or functions has become a popular way to extend their capabilities, with strong results across diverse domains. Most existing work synthesizes large volumes of tool-use trajectories with advanced language models and then applies supervised fine-tuning (SFT) to improve tool-calling capability. A critical limitation is that these synthetic datasets rarely capture explicit reasoning steps, so training teaches tool calls only superficially. In many cases, reasoning is either omitted entirely during training or deferred to inference through prompting techniques. The result is pseudo-reasoning: models learn to mimic surface-level patterns without understanding the underlying decision-making process.

Prior work on improving LLMs’ tool-use capabilities falls into two main strategies. The first concentrates on dataset curation and model refinement: building large-scale supervised datasets and applying training techniques such as SFT and direct preference optimization (DPO). In this line of work, LLMs are combined with external tools such as search engines, calculators, vision tools, and Python interpreters to expand their functional capabilities. The second targets reasoning improvement, shifting from traditional train-time scaling to test-time scaling strategies. Earlier methods in this line relied on step-level supervision and learned reward models to guide reasoning trajectories.

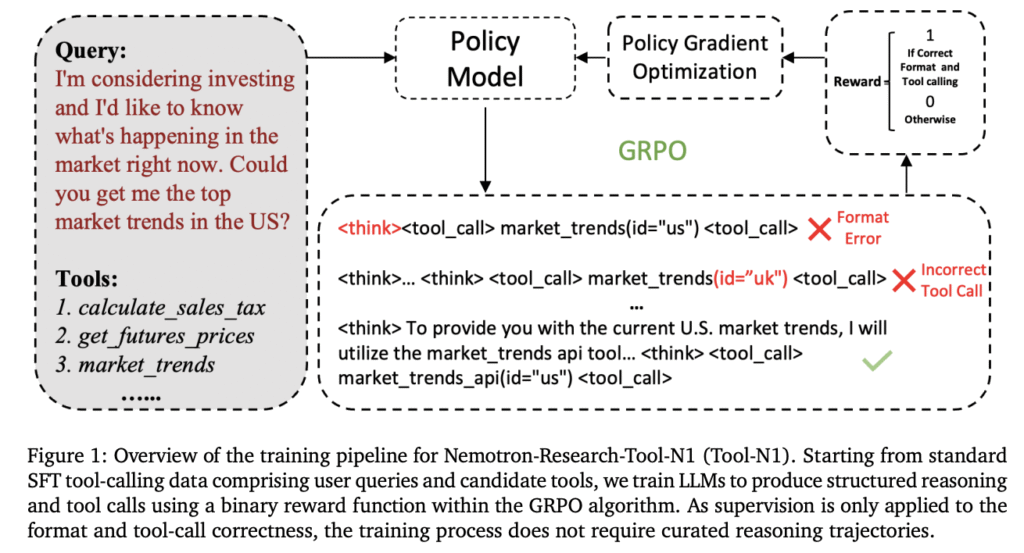

Researchers from NVIDIA, Pennsylvania State University, and the University of Washington have proposed the Nemotron-Research-Tool-N1 series to address the limitations of existing tool-use methods. It diverges from traditional SFT and reasoning trace distillation by training with reinforcement learning (RL) instead. Drawing inspiration from DeepSeek-R1’s success, the researchers developed a lightweight supervision method that evaluates only the structural validity and functional correctness of tool invocations. The resulting binary reward lets the model develop its own reasoning strategies without relying on explicitly annotated reasoning trajectories.
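To make the idea of a binary, rule-based reward concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not the authors' implementation: the function name `binary_reward`, the regular expression, the JSON encoding of tool calls, and the order-insensitive exact-match comparison are all hypothetical choices. It assumes the model wraps its reasoning in `<think>` tags and its calls in `<tool_call>` tags, and it returns 1.0 only when the output is well-formed and the predicted calls match the reference calls.

```python
import json
import re

# Assumed output layout: reasoning in <think> tags, then calls in <tool_call> tags.
_OUTPUT_PATTERN = re.compile(
    r"^\s*<think>(.+?)</think>\s*<tool_call>(.+?)</tool_call>\s*$",
    re.DOTALL,
)


def _canonical(call):
    """Serialize a call with sorted keys so argument order does not matter."""
    return json.dumps(call, sort_keys=True)


def binary_reward(output, reference_calls):
    """Return 1.0 if the output is well-formed and its calls match the reference, else 0.0."""
    match = _OUTPUT_PATTERN.match(output)
    if match is None:
        return 0.0  # structural check failed: missing or misordered tags

    try:
        predicted = json.loads(match.group(2))
    except json.JSONDecodeError:
        return 0.0  # tool-call payload is not valid JSON

    if isinstance(predicted, dict):
        predicted = [predicted]  # accept a single call as a one-element list
    if not isinstance(predicted, list):
        return 0.0

    # Correctness check (assumed): same set of calls, compared order-insensitively.
    predicted_keys = sorted(_canonical(call) for call in predicted)
    reference_keys = sorted(_canonical(call) for call in reference_calls)
    return 1.0 if predicted_keys == reference_keys else 0.0
```

Because the reward never inspects the text inside the `<think>` tags, nothing in this sketch supervises the reasoning itself; only the format and the final calls are scored.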

The researchers unify and preprocess data from existing tool-calling datasets, namely xLAM and a subset of ToolACE, which provide single-turn and multi-turn synthetic tool-calling trajectories. A lightweight prompting template guides tool call generation, with explicit instructions to place intermediate reasoning within `<think>…</think>` tags and tool invocations within `<tool_call>…</tool_call>` tags. Keeping the template lightweight minimizes rigid formatting constraints and reduces the risk of overfitting to specific prompt patterns. The primary backbone models are Qwen2.5-7B-Instruct and Qwen2.5-14B-Instruct; to evaluate how well the method generalizes, the researchers also run it on alternative backbones, including multiple variants from the LLaMA family.
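As a rough illustration of what such a lightweight template might look like, the Python sketch below assembles a prompt from a list of tool schemas and a user query. The wording of `PROMPT_TEMPLATE`, the `<tools>` wrapper, and the `build_prompt` helper are hypothetical; the article does not reproduce the actual template, and only the `<think>` and `<tool_call>` tags come from its description.

```python
import json

# Hypothetical template wording; only the <think> and <tool_call> tags
# are taken from the description above.
PROMPT_TEMPLATE = """You are a helpful assistant with access to the following tools:
<tools>
{tools}
</tools>

First reason about the request inside <think></think> tags.
Then, if a tool is needed, output the calls as a JSON list inside <tool_call></tool_call> tags.

User request: {query}"""


def build_prompt(tools, query):
    """Render one tool schema per line and fill in the template."""
    tool_lines = "\n".join(json.dumps(tool, sort_keys=True) for tool in tools)
    return PROMPT_TEMPLATE.format(tools=tool_lines, query=query)


if __name__ == "__main__":
    # Hypothetical tool schema, for illustration only.
    tools = [{"name": "get_weather", "parameters": {"city": "string"}}]
    print(build_prompt(tools, "What is the weather in Paris?"))
```

The template states the tag structure once and leaves everything else to the model, which is the kind of loose constraint the paragraph above describes.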

Results on the BFCL and API-Bank benchmarks show strong performance from the Nemotron-Research-Tool-N1 models. On BFCL, the Tool-N1-7B and Tool-N1-14B models outperform closed-source models like GPT-4o as well as specialized fine-tuned models such as xLAM-2-70B and ToolACE-8B. They also surpass SFT baselines trained on identical data sources, highlighting the effectiveness of the R1-style RL approach. The API-Bank benchmark supports these findings, with Tool-N1-7B and Tool-N1-14B achieving 4.12% and 5.03% higher accuracy than GPT-4o, respectively. Together, these results indicate that rule-based reinforcement learning can improve LLMs’ tool-calling capabilities.

In conclusion, the researchers introduced Nemotron-Research-Tool-N1, an advance in LLM tool-use capabilities. The work moves away from traditional SFT methodologies toward a rule-based RL approach, which lets models develop reasoning strategies without relying on explicitly annotated reasoning trajectories. Evaluations on BFCL and API-Bank support the approach’s effectiveness, showing performance improvements over existing baselines. The findings open new avenues for developing more adaptable language models that can generate reasoning strategies on their own.

Check out the Paper and GitHub Page. All credit for this research goes to the researchers of this project.

The post Reinforcement Learning, Not Fine-Tuning: Nemotron-Tool-N1 Trains LLMs to Use Tools with Minimal Supervision and Maximum Generalization appeared first on MarkTechPost.
