Policy gradient methods have significantly advanced the reasoning capabilities of LLMs trained with reinforcement learning (RL). A key tool for stabilizing these methods is Kullback-Leibler (KL) regularization, which penalizes large deviations of the current policy from a reference policy. Although KL regularization is widely used in algorithms such as PPO, much remains to be explored in how different KL variants, such as forward KL, reverse KL, and their unnormalized forms, can be estimated and applied within loss functions. These choices, together with the choice of gradient estimator and of on-policy versus off-policy training, affect training stability and performance in ways that remain underexplored.
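To make the KL variants concrete, here is a minimal sketch for small discrete distributions. It assumes the common convention that forward KL places the reference policy first, KL(ref || policy), and reverse KL places the current policy first, and it uses the standard generalized KL for the unnormalized form. The function names are illustrative and are not taken from the RPG code.

```python
import math

def kl(p, q):
    """KL(p || q) = sum_i p_i * log(p_i / q_i), for strictly positive entries."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q))

def forward_kl(policy, ref):
    """Assumed convention: forward KL puts the reference first, KL(ref || policy)."""
    return kl(ref, policy)

def reverse_kl(policy, ref):
    """Assumed convention: reverse KL puts the current policy first, KL(policy || ref)."""
    return kl(policy, ref)

def unnormalized_kl(p, q):
    """Generalized KL for non-negative weights that need not sum to one.

    The extra (sum(q) - sum(p)) term accounts for the difference in total
    mass and vanishes when both inputs are normalized.
    """
    return kl(p, q) - sum(p) + sum(q)

policy = [0.7, 0.2, 0.1]
ref = [0.4, 0.4, 0.2]
print("forward KL:", forward_kl(policy, ref))
print("reverse KL:", reverse_kl(policy, ref))
print("unnormalized KL:", unnormalized_kl(policy, ref))
```

The two directions generally give different values for the same pair of distributions, which is one reason the choice of variant matters for the resulting loss.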

Fine-tuning LLMs with human feedback is crucial for building aligned AI systems. Two main strategies are used: optimizing against a reward model with policy gradient methods such as PPO, and training directly on human preferences with methods such as Direct Preference Optimization (DPO). PPO stabilizes training against a reward model, whereas DPO and its variants learn from pairwise comparisons, which simplifies and scales training and has made them popular in recent models. Reinforcement learning is also increasingly used to improve LLM reasoning, especially on complex tasks such as math and coding. Newer methods aim to reduce computational cost and improve training stability, often by removing the value network or modifying the KL penalty.

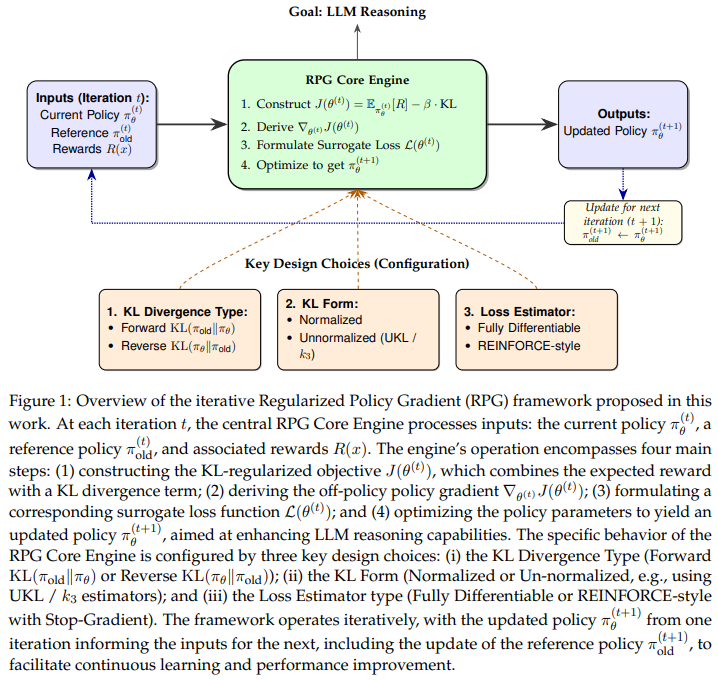

Researchers from UCLA, Tsinghua University, and Shanghai Qi Zhi Institute introduce Regularized Policy Gradient (RPG), a unified framework for KL-regularized policy gradients in online reinforcement learning. They derive policy gradients and surrogate loss functions for both forward and reverse KL divergences, covering normalized and unnormalized policies. RPG supports both fully differentiable objectives and REINFORCE-style estimators, each tailored for off-policy training with importance sampling. The study also identifies and addresses theoretical issues in existing methods such as GRPO, and examines how KL regularization is handled in REINFORCE++. Experiments on LLM reasoning tasks show that RPG achieves improved stability and performance compared with leading baselines, including GRPO, REINFORCE++, and DAPO.

The study presents policy gradient methods that incorporate KL divergence regularization in the online, off-policy setting, where samples are drawn from an older policy and corrected with importance sampling. For forward KL, the gradient combines importance-weighted rewards with a regularization term, and the corresponding loss resembles a maximum likelihood loss when the rewards are zero. The unnormalized forward KL adds a correction for the case where the two distributions do not have the same total mass. Similarly, reverse KL and its unnormalized form penalize deviation from the reference policy by adjusting the reward with a log-probability ratio. All of these variants share a REINFORCE-like gradient structure, which allows alternative implementations based on the stop-gradient operator and supports stable, efficient optimization in practice.
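The structure described above can be checked on a toy problem. The sketch below assumes a softmax policy over three actions, the objective "expected reward minus beta times KL", and exact expectations instead of sampled ones. The function names and the coefficient `beta` are illustrative, and this is not the authors' implementation.

```python
import math

def softmax(logits):
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def objective(logits, rewards, ref, beta, kind):
    """J = E_{a ~ pi}[R(a)] - beta * KL, with kind in {'reverse', 'forward'}."""
    pi = softmax(logits)
    expected_reward = sum(p * r for p, r in zip(pi, rewards))
    if kind == "reverse":   # KL(pi || ref)
        kl = sum(p * math.log(p / q) for p, q in zip(pi, ref))
    else:                   # KL(ref || pi)
        kl = sum(q * math.log(q / p) for p, q in zip(pi, ref))
    return expected_reward - beta * kl

def off_policy_gradient(logits, rewards, ref, old, beta, kind):
    """Gradient of J w.r.t. the logits, written as an expectation under pi_old."""
    pi = softmax(logits)
    n = len(logits)
    grad = [0.0] * n
    for a in range(n):
        w = pi[a] / old[a]  # importance weight pi / pi_old
        if kind == "reverse":
            # The reward is adjusted by the log-probability ratio.
            coeff = w * (rewards[a] - beta * math.log(pi[a] / ref[a]))
        else:
            # Importance-weighted reward plus a likelihood-style term on ref.
            coeff = w * rewards[a] + beta * ref[a] / old[a]
        for k in range(n):
            # d log pi(a) / d logit_k for a softmax policy
            score = (1.0 if k == a else 0.0) - pi[k]
            grad[k] += old[a] * coeff * score
    return grad

logits = [0.2, -0.5, 1.0]
rewards = [1.0, 0.0, 0.5]
ref = softmax([0.0, 0.0, 0.0])
old = softmax([0.1, 0.0, 0.3])
for kind in ("reverse", "forward"):
    print(kind, off_policy_gradient(logits, rewards, ref, old, 0.1, kind))
```

With zero rewards, the forward KL branch reduces to the gradient of the reference-weighted log-likelihood, which matches the maximum likelihood observation above. In an autodiff framework, the same gradients would typically be obtained from a surrogate loss in which the coefficient is wrapped in a stop-gradient.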

The researchers evaluated their proposed RPG methods (both the fully differentiable and the REINFORCE-style variants) against several established baselines on complex math reasoning tasks using Qwen2.5 language models. They trained on the DAPO-Math-17k dataset and evaluated on benchmarks such as AMC23 and AIME. RPG variants consistently showed strong accuracy, stable training, and efficient memory usage. The implementation used the Verl framework together with KL regularization, PPO-style clipping, and Schedule-Free AdamW for smoother optimization. RPG models generally compared favorably with the baselines on reward, entropy, and response-length behavior during training, which points to their robustness and suitability for stable, high-performance learning.
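For readers unfamiliar with PPO-style clipping, the following is a minimal sketch of the standard per-sample clipped surrogate from PPO. It is shown only as background: the exact clipping scheme used in the RPG experiments may differ, and `eps=0.2` is an illustrative default rather than a value reported in the study.

```python
def clipped_surrogate(ratio, advantage, eps=0.2):
    """Standard PPO clipped objective for one sample (to be maximized).

    ratio is the importance weight pi_theta(a|s) / pi_old(a|s).
    Taking the minimum removes the incentive to push the ratio outside
    [1 - eps, 1 + eps] in the direction that would increase the objective.
    """
    clipped = max(1.0 - eps, min(ratio, 1.0 + eps))
    return min(ratio * advantage, clipped * advantage)

# A large ratio with a positive advantage is capped at (1 + eps) * advantage.
print(clipped_surrogate(1.5, 1.0))
# A small ratio with a negative advantage is held at (1 - eps) * advantage.
print(clipped_surrogate(0.5, -1.0))
```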

In conclusion, RPG is a comprehensive framework for designing and analyzing policy gradient methods that incorporate KL regularization in online, off-policy reinforcement learning. The authors explore a range of configurations, including forward and reverse KL divergences, normalized and unnormalized policy distributions, and two types of estimators: fully differentiable and REINFORCE-style. RPG aims to provide a structured approach to understanding and implementing these variations. Applied to reasoning tasks with large language models, the proposed methods show more stable training and competitive or improved performance compared with established baselines such as GRPO, REINFORCE++, and DAPO.


The post Off-Policy Reinforcement Learning RL with KL Divergence Yields Superior Reasoning in Large Language Models appeared first on MarkTechPost.
