Amazon operations span the globe, touching the lives of millions of customers, employees, and vendors every day. From the vast logistics network to the cutting-edge technology infrastructure, this scale is a testament to the company’s ability to innovate and serve its customers. With this scale comes a responsibility to manage risks and address claims, whether they involve workers’ compensation, transportation incidents, or other insurance-related matters. Risk managers oversee claims against Amazon throughout their lifecycle. Claim documents from various sources accumulate as claims mature, with a single claim consisting of 75 documents on average. Risk managers are required to strictly follow the relevant standard operating procedure (SOP) and review the evolution of dozens of claim aspects to assess severity and take proper action, reviewing and addressing each claim fairly and efficiently. But as Amazon continues to grow, how are risk managers empowered to keep up with the growing number of claims?

In December 2024, an internal technology team at Amazon built and implemented an AI-powered solution that is applied to data related to claims against the company. This solution generates structured summaries of claims, each under 500 words, across various categories, improving efficiency while maintaining the accuracy of the claims review process. However, the team faced challenges with high inference costs and processing times (3–5 minutes per claim), particularly as new documents are added. Because the team plans to expand this technology to other business lines, they explored Amazon Nova foundation models as potential alternatives to address cost and latency concerns.

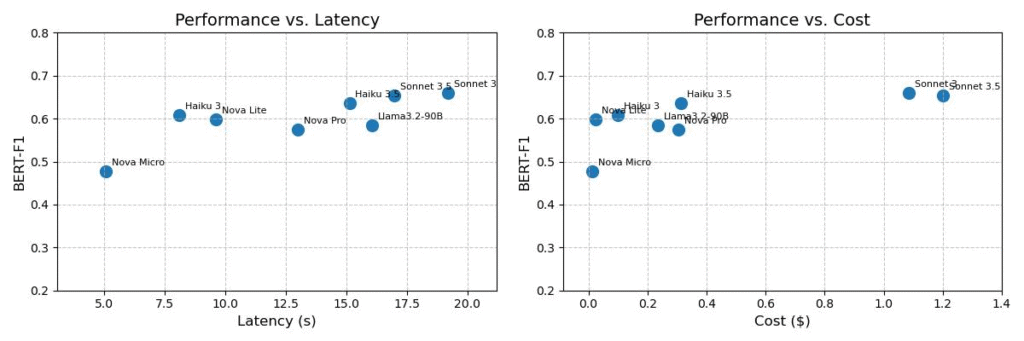

The following graphs show performance compared with latency and performance compared with cost for various foundation models on the claim dataset.

The evaluation of the claims summarization use case proved that Amazon Nova foundation models (FMs) are a strong alternative to other frontier large language models (LLMs), achieving comparable performance with significantly lower cost and higher overall speed. The Amazon Nova Lite model demonstrates strong summarization capabilities in the context of long, diverse, and messy documents.

Solution overview

The summarization pipeline begins by processing raw claim data using AWS Glue jobs. It stores the data in intermediate Amazon Simple Storage Service (Amazon S3) buckets and uses Amazon Simple Queue Service (Amazon SQS) to manage summarization jobs. Claim summaries are generated by AWS Lambda using foundation models hosted in Amazon Bedrock. We first filter out irrelevant claim data using an LLM-based classification model built on Amazon Nova Lite, then summarize only the relevant claim data to reduce the context window. Because relevance classification and summarization require different levels of intelligence, we select the appropriate model for each step to optimize cost while maintaining performance. Because claims are re-summarized when new information arrives, we also cache the intermediate results and summaries in Amazon DynamoDB to avoid duplicate inference and reduce cost. The following image shows a high-level architecture of the claim summarization use case solution.
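As a rough illustration of the filter-then-summarize flow with caching described above, the following minimal sketch uses plain Python dictionaries in place of Amazon DynamoDB and injected callables in place of the Amazon Bedrock model calls. The function names, the content-hash cache keys, and the cache layout are assumptions made for illustration; the post does not describe the team's actual implementation at this level of detail.

```python
import hashlib
from typing import Callable, Dict, List


def doc_key(text: str) -> str:
    """Content hash used as a cache key (hypothetical keying scheme)."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def summarize_claim(
    documents: List[str],
    is_relevant: Callable[[str], bool],      # stand-in for the relevance classifier
    summarize: Callable[[List[str]], str],   # stand-in for the summarization model
    relevance_cache: Dict[str, bool],        # stand-in for a DynamoDB table
    summary_cache: Dict[str, str],           # stand-in for a DynamoDB table
) -> str:
    """Classify only unseen documents, then summarize only if the relevant set changed."""
    relevant: List[str] = []
    relevant_keys: List[str] = []
    for doc in documents:
        key = doc_key(doc)
        # Intermediate result: each document is classified at most once.
        if key not in relevance_cache:
            relevance_cache[key] = is_relevant(doc)
        if relevance_cache[key]:
            relevant.append(doc)
            relevant_keys.append(key)

    # The summary key depends only on the relevant documents, so a newly
    # arrived irrelevant document does not trigger another summarization call.
    summary_key = doc_key("|".join(relevant_keys))
    if summary_key not in summary_cache:
        summary_cache[summary_key] = summarize(relevant)
    return summary_cache[summary_key]
```

With this keying, adding an irrelevant document to a claim costs one classification call and no summarization call, which is one way the caching step can cut duplicate inference.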

Although the Amazon Nova team has published performance benchmarks across several different categories, claims summarization is a unique use case given its diversity of inputs and long context windows. This prompted the technology team owning the claims solution to investigate further with their own benchmarking study. To assess the performance, speed, and cost of Amazon Nova models for their specific use case, the team curated a benchmark dataset consisting of 95 pairs of claim documents and verified aspect summaries. Claim documents range from 1,000 to 60,000 words, with most being around 13,000 words (median 10,100). The verified summaries of these documents are usually brief, containing fewer than 100 words. Inputs to the models include diverse types of documents and summaries that cover a variety of aspects in production.
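A benchmark of this kind can be sketched as a small harness that runs each model over the document and reference-summary pairs and records quality, latency, and cost. The sketch below is an assumption-laden outline, not the team's harness: `call_model`, `score`, and the per-1,000-token prices are hypothetical inputs you would supply, and the post does not state which quality metric the team used.

```python
import statistics
import time
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class BenchmarkResult:
    median_latency_s: float
    mean_score: float
    total_cost: float


def run_benchmark(
    pairs: List[Tuple[str, str]],                       # (claim document, verified summary)
    call_model: Callable[[str], Tuple[str, int, int]],  # returns (summary, input tokens, output tokens)
    score: Callable[[str, str], float],                 # hypothetical quality metric
    price_in_per_1k: float,                             # placeholder price per 1,000 input tokens
    price_out_per_1k: float,                            # placeholder price per 1,000 output tokens
) -> BenchmarkResult:
    """Run one model over the benchmark and aggregate quality, latency, and cost."""
    latencies: List[float] = []
    scores: List[float] = []
    total_cost = 0.0
    for document, reference in pairs:
        start = time.perf_counter()
        summary, tokens_in, tokens_out = call_model(document)
        latencies.append(time.perf_counter() - start)
        scores.append(score(summary, reference))
        total_cost += (tokens_in / 1000) * price_in_per_1k
        total_cost += (tokens_out / 1000) * price_out_per_1k
    return BenchmarkResult(
        median_latency_s=statistics.median(latencies),
        mean_score=statistics.fmean(scores),
        total_cost=total_cost,
    )
```

Running the same harness once per candidate model, with the same pairs and the same scoring function, gives directly comparable points for performance-versus-latency and performance-versus-cost plots.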

According to benchmark tests, the team observed that Amazon Nova Lite is twice as fast and costs 98% less than their current model. Amazon Nova Micro is even more efficient, running four times faster and costing 99% less. These cost and latency improvements offer more flexibility for designing a sophisticated model and scaling up test-time compute to improve summary quality. The team also observed that the latency gap between Amazon Nova models and the next best model widened for long context windows and long outputs, making Amazon Nova a stronger alternative for long documents when optimizing for latency. Additionally, the team performed this benchmarking study using the same prompt as the current in-production solution, without modification. Even with an unchanged prompt, Amazon Nova models followed instructions and generated the desired format for post-processing, demonstrating seamless prompt portability. Based on the benchmarking and evaluation results, the team used Amazon Nova Lite for both the classification and summarization use cases.
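Reusing one prompt across models is straightforward with the Amazon Bedrock Converse API, because the model identifier is just a request parameter. The following is a minimal sketch under stated assumptions: the helper name, the prompts, and the `max_tokens` default are illustrative, and the client is passed in so the function can be exercised without AWS credentials. In practice you would create the client with `boto3.client("bedrock-runtime")`; the model identifiers shown in the comments may need a regional inference profile prefix depending on your account and Region.

```python
from typing import Any, Dict


def summarize_with_model(
    client: Any,            # a boto3 "bedrock-runtime" client, or a test double
    model_id: str,          # for example "amazon.nova-lite-v1:0" or "amazon.nova-micro-v1:0"
    system_prompt: str,     # the unchanged production prompt (illustrative)
    claim_text: str,
    max_tokens: int = 700,  # illustrative cap for a summary under 500 words
) -> Dict[str, Any]:
    """Send the same prompt to any Bedrock-hosted model through the Converse API."""
    response = client.converse(
        modelId=model_id,
        system=[{"text": system_prompt}],
        messages=[{"role": "user", "content": [{"text": claim_text}]}],
        inferenceConfig={"maxTokens": max_tokens},
    )
    return {
        "summary": response["output"]["message"]["content"][0]["text"],
        "input_tokens": response["usage"]["inputTokens"],
        "output_tokens": response["usage"]["outputTokens"],
        "latency_ms": response["metrics"]["latencyMs"],
    }


# Example usage (requires AWS credentials and model access, so it is left as a comment):
#   import boto3
#   client = boto3.client("bedrock-runtime")
#   for model_id in ("amazon.nova-lite-v1:0", "amazon.nova-micro-v1:0"):
#       result = summarize_with_model(client, model_id, "Summarize the claim.", claim_text)
```

The token counts and latency returned in the response are the inputs you need to compare models on cost and speed for your own documents.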

Conclusion

In this post, we shared how an internal technology team at Amazon evaluated Amazon Nova models, resulting in notable improvements in inference speed and cost-efficiency. Looking back on the initiative, the team identified several critical factors that offer key advantages:

- Access to a diverse model portfolio – The availability of a wide array of models, including compact yet powerful options such as Amazon Nova Micro and Amazon Nova Lite, enabled the team to quickly experiment with and integrate the most suitable models for their needs.

- Scalability and flexibility – The cost and latency improvements of the Amazon Nova models allow for more flexibility in designing sophisticated models and scaling up test-time compute to improve summary quality. This scalability is particularly valuable for organizations handling large volumes of data or complex workflows.

- Ease of integration and migration – The models’ ability to follow instructions and generate outputs in the desired format simplifies post-processing and integration into existing systems.

If your organization has a similar large-document processing use case that is costly and time-consuming, this evaluation suggests that Amazon Nova Lite and Amazon Nova Micro can substantially reduce both cost and latency. These models handle large volumes of diverse documents and long context windows well, which suits complex data processing environments. What makes this particularly compelling is the models’ ability to maintain high performance while significantly reducing operational costs. It’s important to evaluate new models against all three pillars: quality, cost, and speed. Benchmark these models with your own use case and datasets.

You can get started with Amazon Nova on the Amazon Bedrock console. Learn more at the Amazon Nova product page.

About the authors

Aitzaz Ahmad is an Applied Science Manager at Amazon, where he leads a team of scientists building various applications of machine learning and generative AI in finance. His research interests are in natural language processing (NLP), generative AI, and LLM agents. He received his PhD in electrical engineering from Texas A&M University.

Stephen Lau is a Senior Manager of Software Development at Amazon, where he leads teams of scientists and engineers. His team develops powerful fraud detection and prevention applications, saving Amazon billions annually. They also build Treasury applications that optimize Amazon global liquidity while managing risks, significantly impacting the financial security and efficiency of Amazon.

Yong Xie is an applied scientist in Amazon FinTech. He focuses on developing large language models and generative AI applications for finance.

Kristen Henkels is a Sr. Product Manager – Technical in Amazon FinTech, where she focuses on helping internal teams improve their productivity by leveraging ML and AI solutions. She holds an MBA from Columbia Business School and is passionate about empowering teams with the right technology to enable strategic, high-value work.

Shivansh Singh is a Principal Solutions Architect at Amazon. He is passionate about driving business outcomes through innovative, cost-effective and resilient solutions, with a focus on machine learning, generative AI, and serverless technologies. He is a technical leader and strategic advisor to large-scale games, media, and entertainment customers. He has over 16 years of experience transforming businesses through technological innovations and building large-scale enterprise solutions.

Dushan Tharmal is a Principal Product Manager – Technical on the Amazon Artificial General Intelligence team, responsible for the Amazon Nova foundation models. He earned his bachelor’s in mathematics at the University of Waterloo and has over 10 years of technical product leadership experience across financial services and loyalty. In his spare time, he enjoys wine, hikes, and philosophy.

Anupam Dewan is a Senior Solutions Architect with a passion for generative AI and its applications in real life. He and his team enable Amazon builders who build customer-facing applications using generative AI. He lives in the Seattle area, and outside of work, he loves to go hiking and enjoy nature.
